Artificial intelligence shouldn’t be a “black box.” Get answers to the most common questions business leaders ask before fully committing a data science team to a business problem.

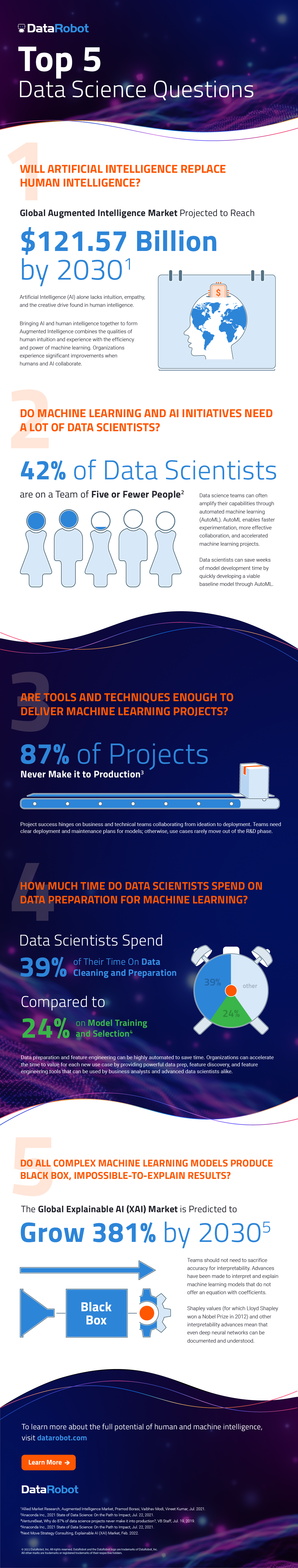

1. Will Artificial Intelligence Replace Human Intelligence?

- The global augmented intelligence market is projected to reach $121.57 billion by 2030.[1]

- Artificial intelligence (AI) alone lacks the intuition, empathy, and creative drive found in human intelligence. Augmented intelligence brings the two together, pairing human intuition and experience with the efficiency and power of machine learning. Organizations experience significant improvements when humans and AI collaborate.

2. Do Machine Learning and AI Initiatives Need a Lot of Data Scientists?

- 42% of data scientists are solo practitioners or are on a team of five or fewer people.[2]

- Data science teams can often amplify their capabilities through automated machine learning (AutoML). AutoML enables faster experimentation, more effective collaboration, and accelerated machine learning projects. Data scientists can save weeks of model development time by quickly developing a viable baseline model through AutoML.
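
To make the idea of an automated baseline concrete, here is a minimal sketch of the loop an AutoML tool runs at much larger scale: fit several candidate models, score each with cross-validation, and keep the best one. The two candidates, the toy data, and the helper names (`fit_mean`, `fit_linear`, `cv_mse`, `pick_baseline`) are illustrative assumptions, not any vendor's API.

```python
from statistics import mean


def fit_mean(xs, ys):
    # Simplest possible candidate: always predict the training mean.
    m = mean(ys)
    return lambda x: m


def fit_linear(xs, ys):
    # One-variable least-squares line, computed in closed form.
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    intercept = my - slope * mx
    return lambda x: intercept + slope * x


def cv_mse(fit, xs, ys, folds=3):
    # k-fold cross-validated mean squared error for one candidate.
    rows = list(zip(xs, ys))
    errors = []
    for f in range(folds):
        train = [r for i, r in enumerate(rows) if i % folds != f]
        test = [r for i, r in enumerate(rows) if i % folds == f]
        model = fit([x for x, _ in train], [y for _, y in train])
        errors.extend((model(x) - y) ** 2 for x, y in test)
    return mean(errors)


def pick_baseline(candidates, xs, ys):
    # Score every candidate and return the name with the lowest error.
    scores = {name: cv_mse(fit, xs, ys) for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores


# Hypothetical toy data with a roughly linear trend.
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0]

best, scores = pick_baseline({"mean": fit_mean, "linear": fit_linear}, xs, ys)
print(best, scores)
```

A production AutoML system would search far more model families and hyperparameters, but the time savings come from the same place: the search and scoring are automated, so the data scientist starts from a scored baseline instead of a blank page.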

3. Are Tools and Techniques Enough to Deliver Machine Learning Projects?

- 87% of data science projects never make it into production.[3]

- Project success hinges on business and technical teams collaborating from ideation to deployment. Teams need clear deployment and maintenance plans for models; otherwise, use cases rarely move out of the R&D phase.

4. How Much Time Do Data Scientists Spend on Data Preparation for Machine Learning?

- Data scientists spend 39% of their time on data cleaning and preparation compared to 24% on model training and selection.[4]

- Data preparation and feature engineering can be highly automated to save time. Organizations can accelerate the time to value for each new use case by providing powerful data prep, feature discovery, and feature engineering tools that can be used by business analysts and advanced data scientists alike.

5. Do All Complex Machine Learning Models Produce Black Box, Impossible-to-Explain Results?

- The global explainable AI (XAI) market is predicted to grow 381% by 2030.[5]

- Teams should not need to sacrifice accuracy for interpretability. Methods now exist to interpret and explain machine learning models that do not reduce to an equation with coefficients. Shapley values (a concept from cooperative game theory introduced by Lloyd Shapley, who shared the 2012 Nobel Memorial Prize in Economic Sciences for his work on stable allocations and market design) and other interpretability advances mean that even deep neural networks can be documented and understood.
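
As an illustration of how Shapley values attribute a prediction to individual inputs, the sketch below computes them exactly for a tiny model by averaging each feature's marginal contribution over every subset of the other features. The model (`toy_model`), the all-zero baseline, and the function name `shapley_values` are assumptions made up for this example. Exact enumeration is only practical for a handful of features, so real interpretability tools approximate it.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    n = len(instance)

    def value(subset):
        # Features in `subset` take the instance's values; the rest stay at baseline.
        x = [instance[i] if i in subset else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):
            # Shapley weight for coalitions of size k that exclude feature i.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(x):
    # Hypothetical model: one additive term plus an interaction term.
    return 2.0 * x[0] + x[1] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]

phi = shapley_values(toy_model, instance, baseline)
print(phi)
```

In this toy case the first feature receives its full additive effect, while the two interacting features split the interaction term equally. The attributions always sum to the difference between the prediction for the instance and the prediction for the baseline, which is what makes them useful for documenting an otherwise opaque model.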

[1] Allied Market Research, Augmented Intelligence Market, Pramod Borasi, Vaibhav Modi, Vineet Kumar, Jul. 2021.

[2] Anaconda Inc., 2021 State of Data Science: On the Path to Impact, Jul. 22, 2021.

[3] VentureBeat, Why do 87% of data science projects never make it into production?, VB Staff, Jul. 19, 2019.

[4] Anaconda Inc., 2021 State of Data Science: On the Path to Impact, Jul. 22, 2021.

[5] Next Move Strategy Consulting, Explainable AI (XAI) Market, Feb. 2022.