AI for government agencies

Mission-critical AI.

Proven. Secure. Reliable.

Purpose-built for government agencies, DataRobot deploys across on-prem, hybrid, cloud, or fully air-gapped environments to meet the U.S. government’s strictest requirements.

Operational edge, frontline impact

Frontline operations and situational intelligence

Integrated with SAP Defense & Security modules, DataRobot helps agencies keep assets and systems operational in the most demanding environments.

- Deliver real-time insights from complex data streams to accelerate mission decisions

- Summarize sensor and intelligence inputs into a single operational picture

- Orchestrate fast, coordinated actions across agencies as situations evolve

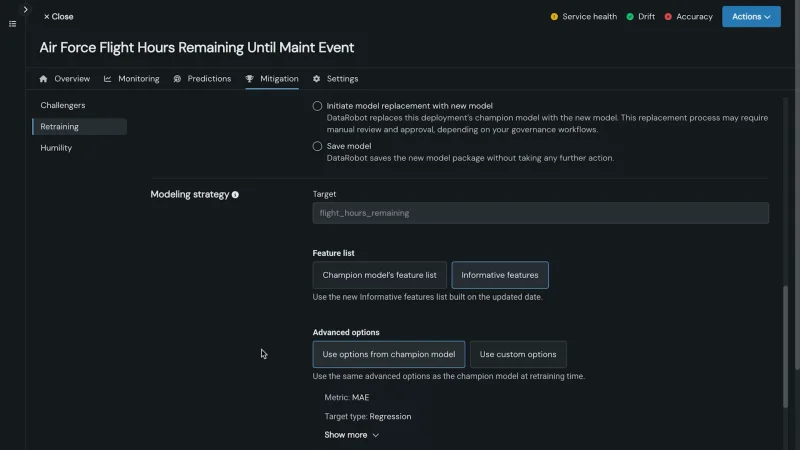

Ensure mission readiness

Anticipate needs, optimize training, and keep teams and systems ready when missions demand it.

- Forecast workforce and equipment readiness to prevent shortfalls

- Generate readiness summaries automatically

- Coordinate logistics and supply planning with AI agents

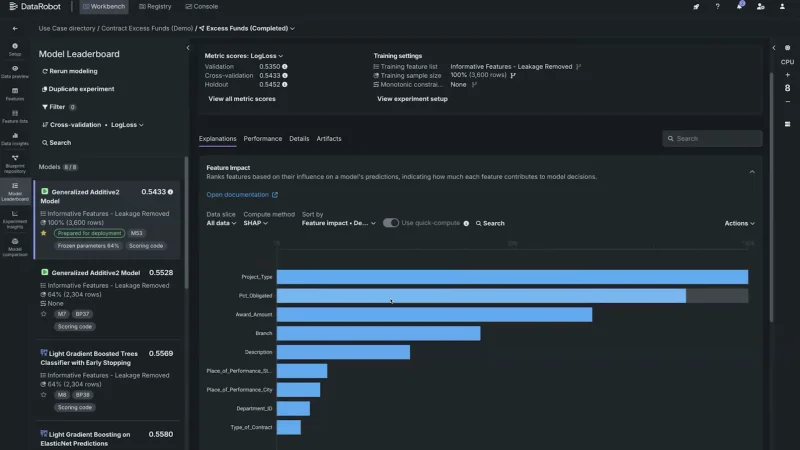

Strengthen fiscal stewardship and oversight

Integrated with SAP Ariba and S/4HANA, DataRobot helps agencies eliminate waste, reallocate funds to priority programs, and ensure every dollar is used effectively.

- Identify at-risk contracts and anomalous spend

- Automate budget, compliance, and audit reporting

- Reallocate funds in real time with AI agents

Across public- and private-sector programs, customers have reallocated billions and achieved $10M+ in annual savings by streamlining operations and improving fiscal efficiency.

MISSION-READY AI

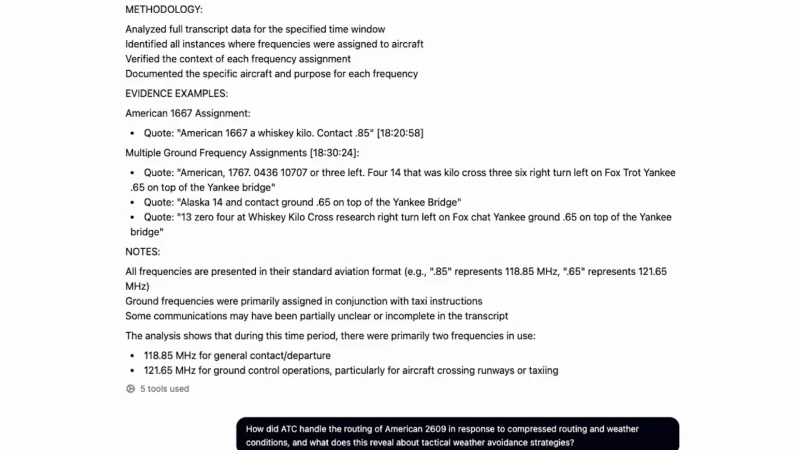

Radio intelligence agent

Real-time demodulation extracts voice, data, and video from radio signals for AI processing at the edge, supporting military operations.

An interactive agent automatically raises alerts based on content and provides multimodal analysis for situational awareness. Example prompt: "Build a timeline summary table of all comms at 485 MHz with each speaker in a separate column."

Proven across defense and civilian agencies

Named a Leader in the 2025 Gartner Magic Quadrant for Data Science and Machine Learning Platforms

2.4K+ staff hours saved

Automate mission tasks with ready-to-run AI applications, freeing teams to focus on higher-value priorities.

$2.2B in resource flexibility

AI-powered insights identified surplus funds, enabling agencies to reallocate resources to priority programs and improve fiscal agility.

5X at-risk contracts detected

Identify and mitigate risks earlier with AI that strengthens oversight and accountability.

Platform compliance

DataRobot aligns with the strictest federal standards to support today’s high-security workloads — and tomorrow’s.

- NIST 800-53

- CMMC

- ITAR

- HIPAA

- NIST AI governance

- IL5 in Advana

Contract vehicles

Available through leading government contract vehicles:

- GSA MAS

- NASA SEWP V

- ITES-SW2

- AWS ICMP

- Tradewinds

- JWCC

The future of AI in government starts here

DataRobot brings predictive, generative, and agentic AI together in one secure platform, ready for the government's toughest challenges.