Deeper integration between DataRobot and Snowflake to help customers go from data to value even faster

In late 2018, DataRobot and Snowflake partnered to enable DataRobot users to ingest data from Snowflake’s cloud-based data platform in order to quickly scale and leverage DataRobot’s leading machine learning capabilities. While this was a great first step, we knew that what would be most impactful was a full circle experience for joint customers.

As such, DataRobot is very pleased to announce our latest integration with Snowflake aimed at sending prediction results back to Snowflake directly and easily, removing the need for scripts, technical development, or other steps for getting results back to the data warehouse and into your organization’s systems. The result is closer to a seamless movement of data between DataRobot and Snowflake.

How it Works

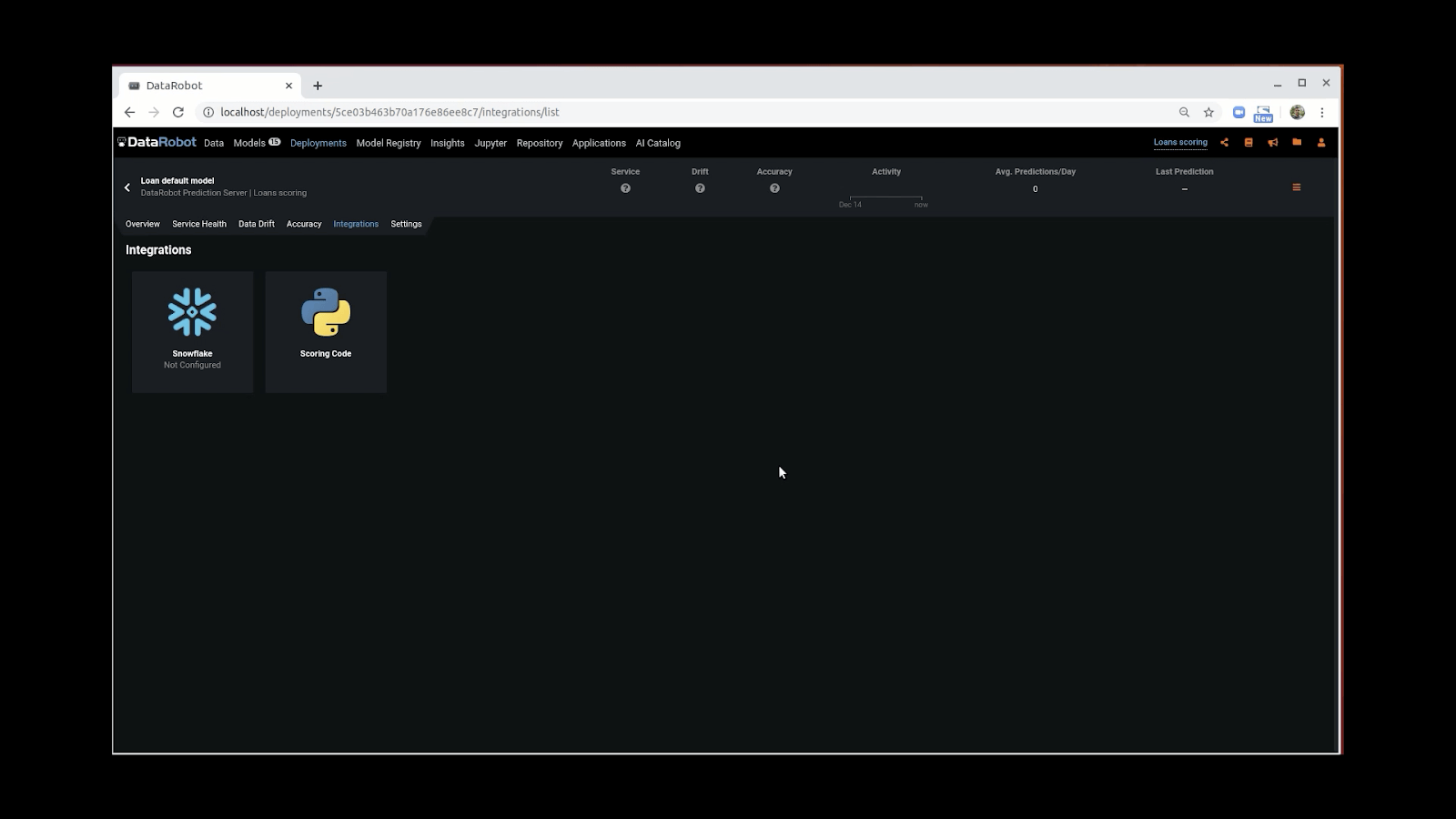

DataRobot users begin with the Integrations tab in the app, where they are guided through four steps that include choosing whether to include Explanations and specifying the destination for the predictions. The same flow can be used to create a new job or to edit an existing one.

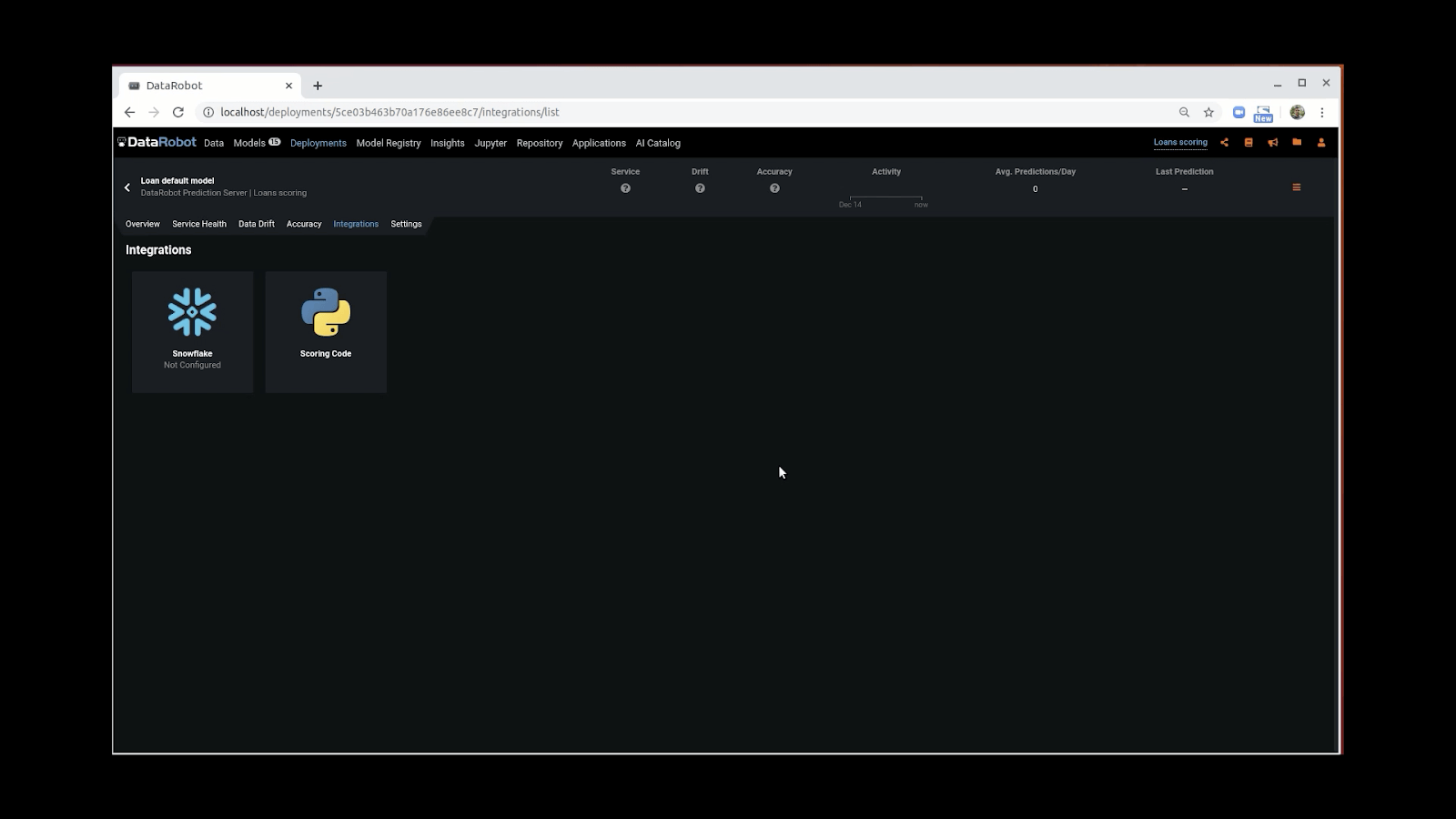

From the Deployments tab, users will go to the Snowflake logo tile on the Integrations screen to set up their job:

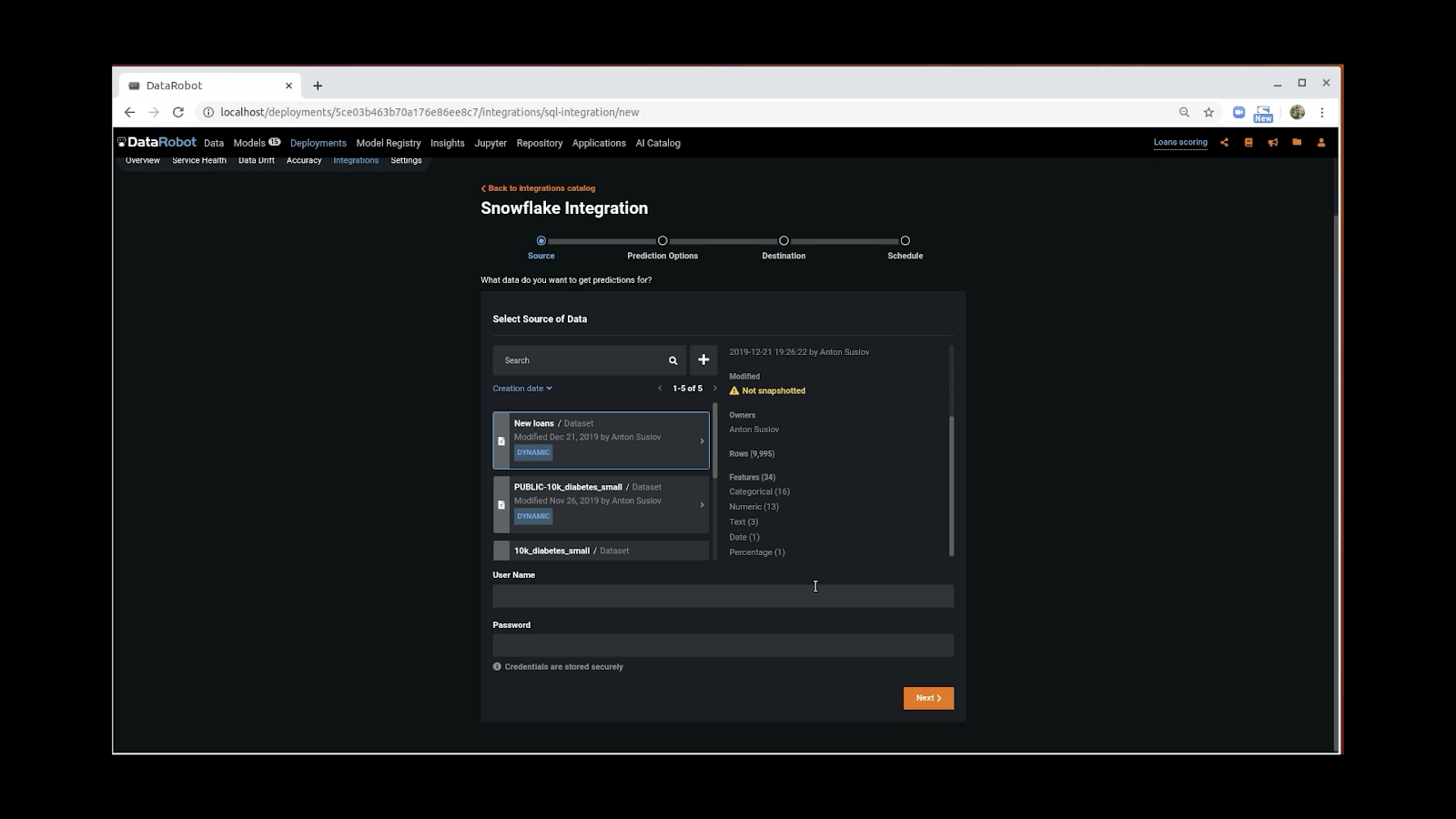

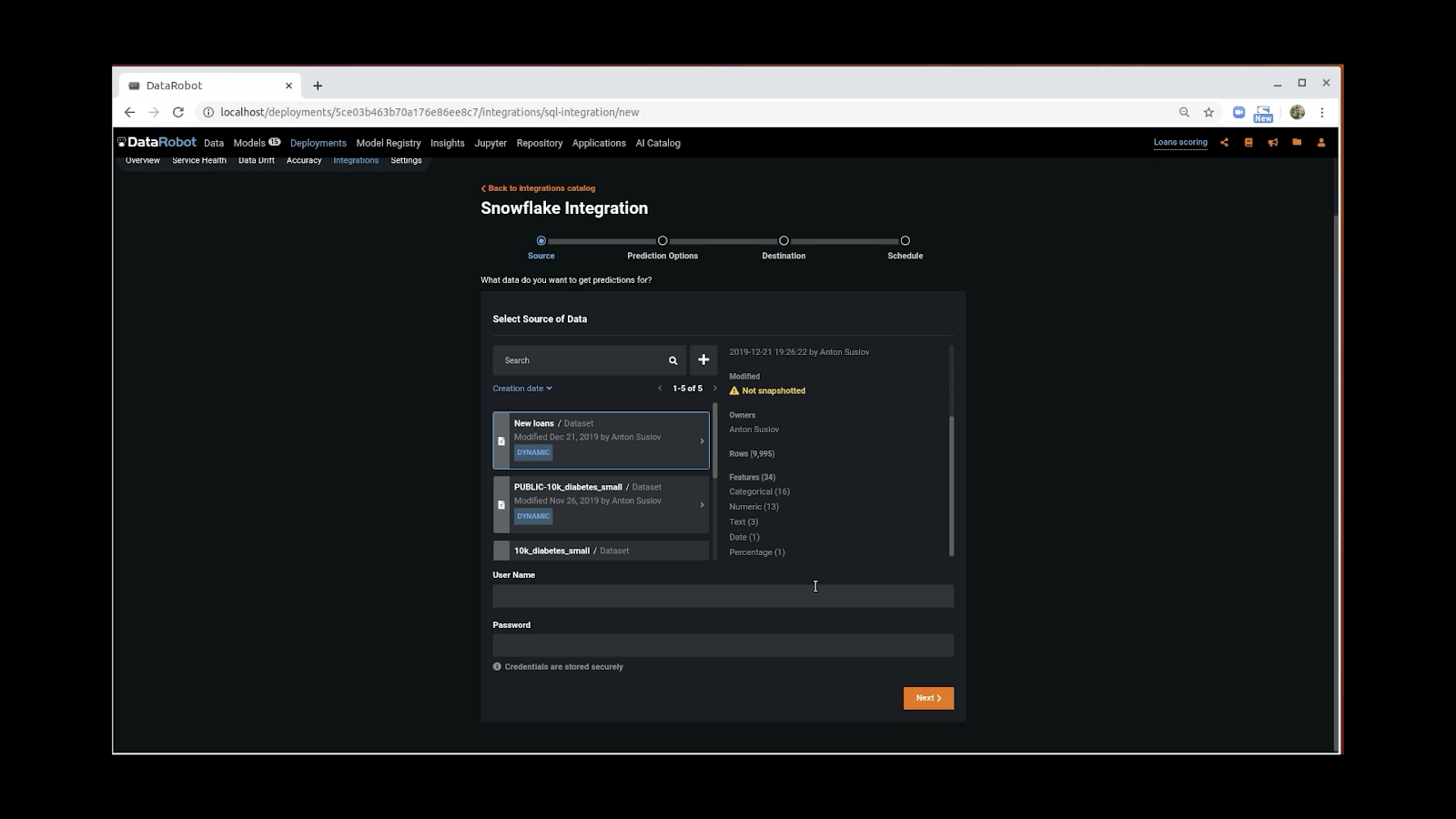

Next, users configure their job, beginning with specifying a Snowflake Data Source in the Catalog and providing credentials in order to begin scoring the data with their model:

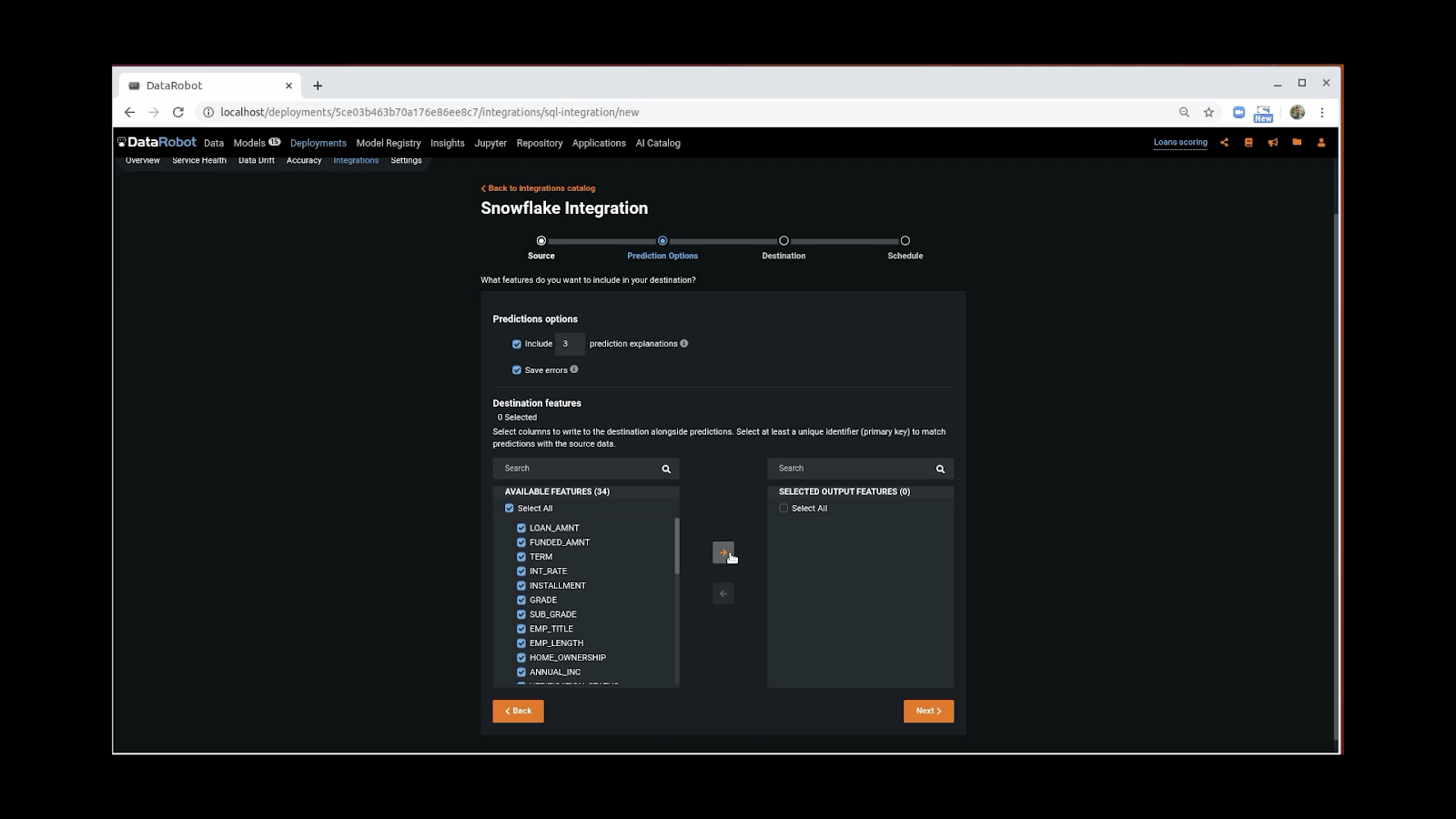

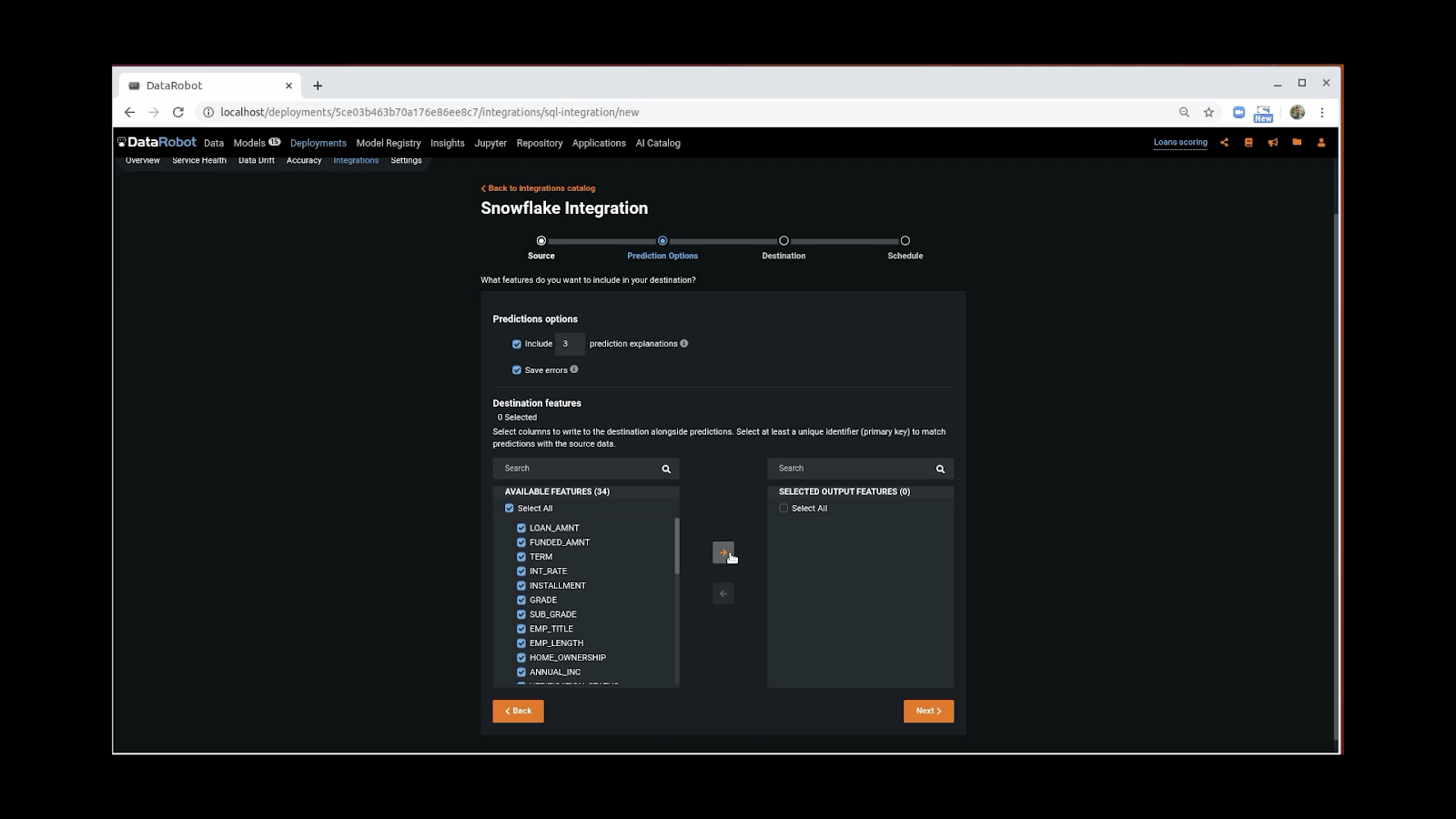

Users can then choose to include Explanations and to save any prediction error messages, and select the schema, structure, and features for the output table:

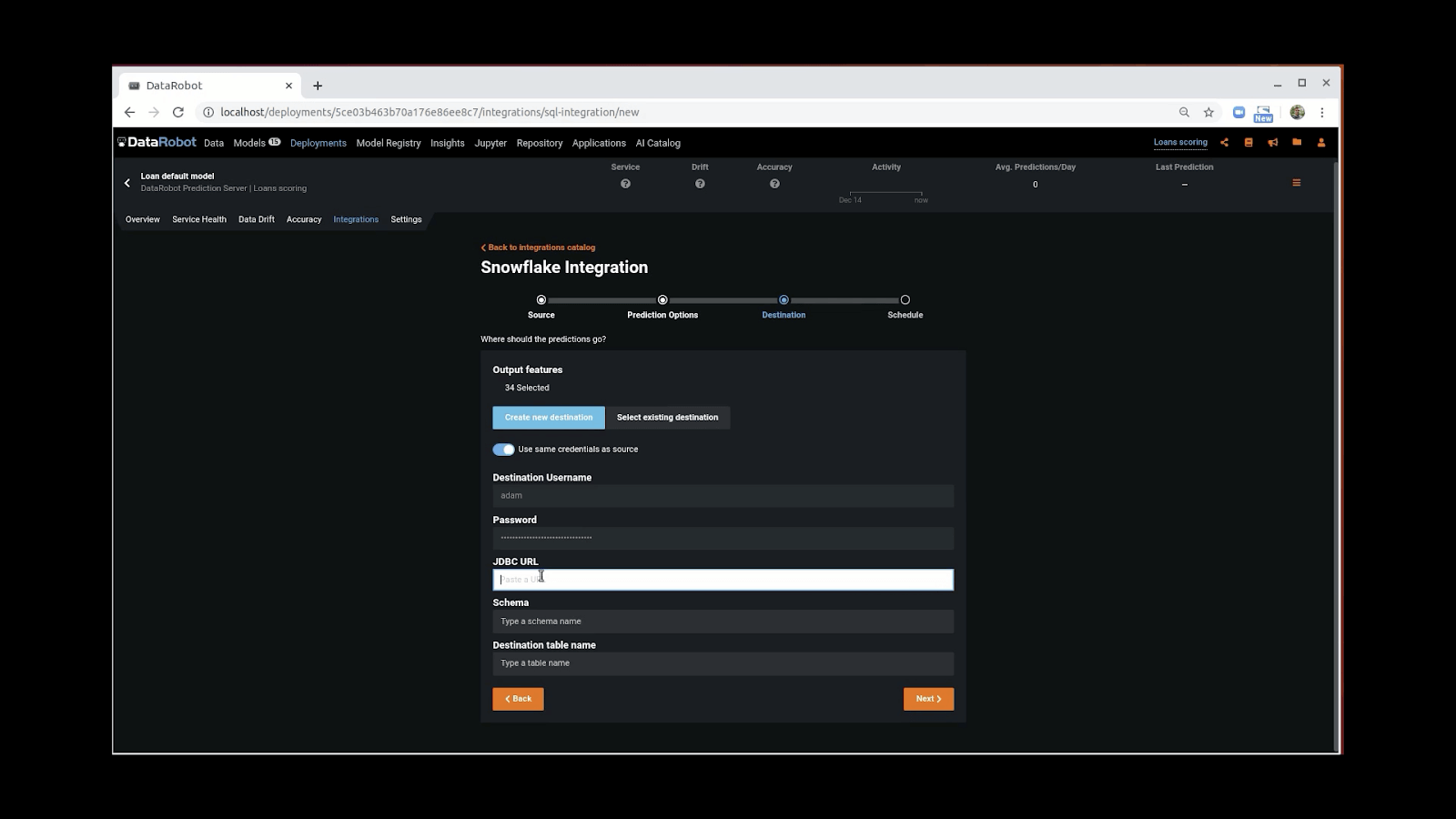

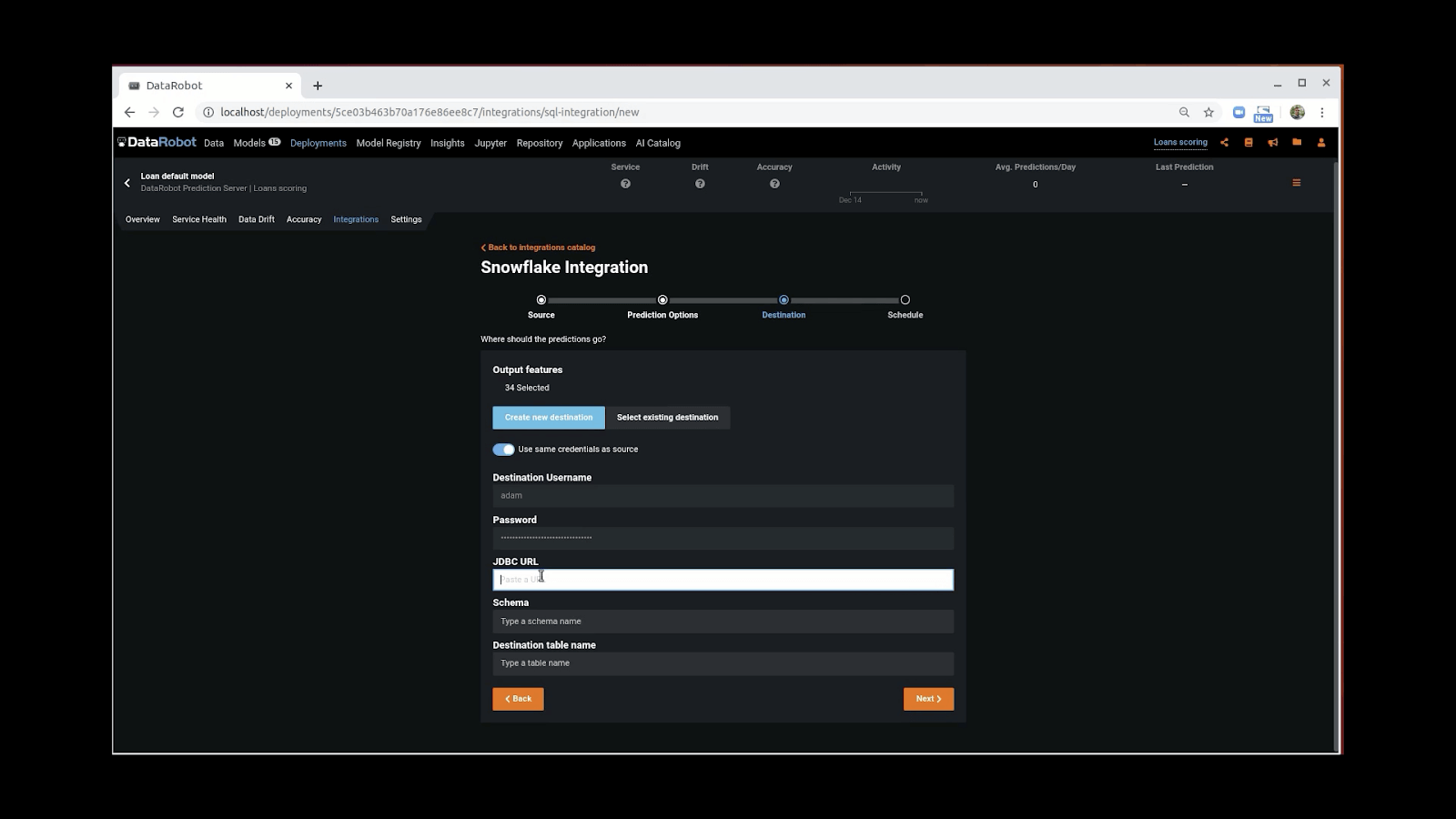

Next, simply add your Snowflake JDBC connection destination and name your table, and you’re almost finished:

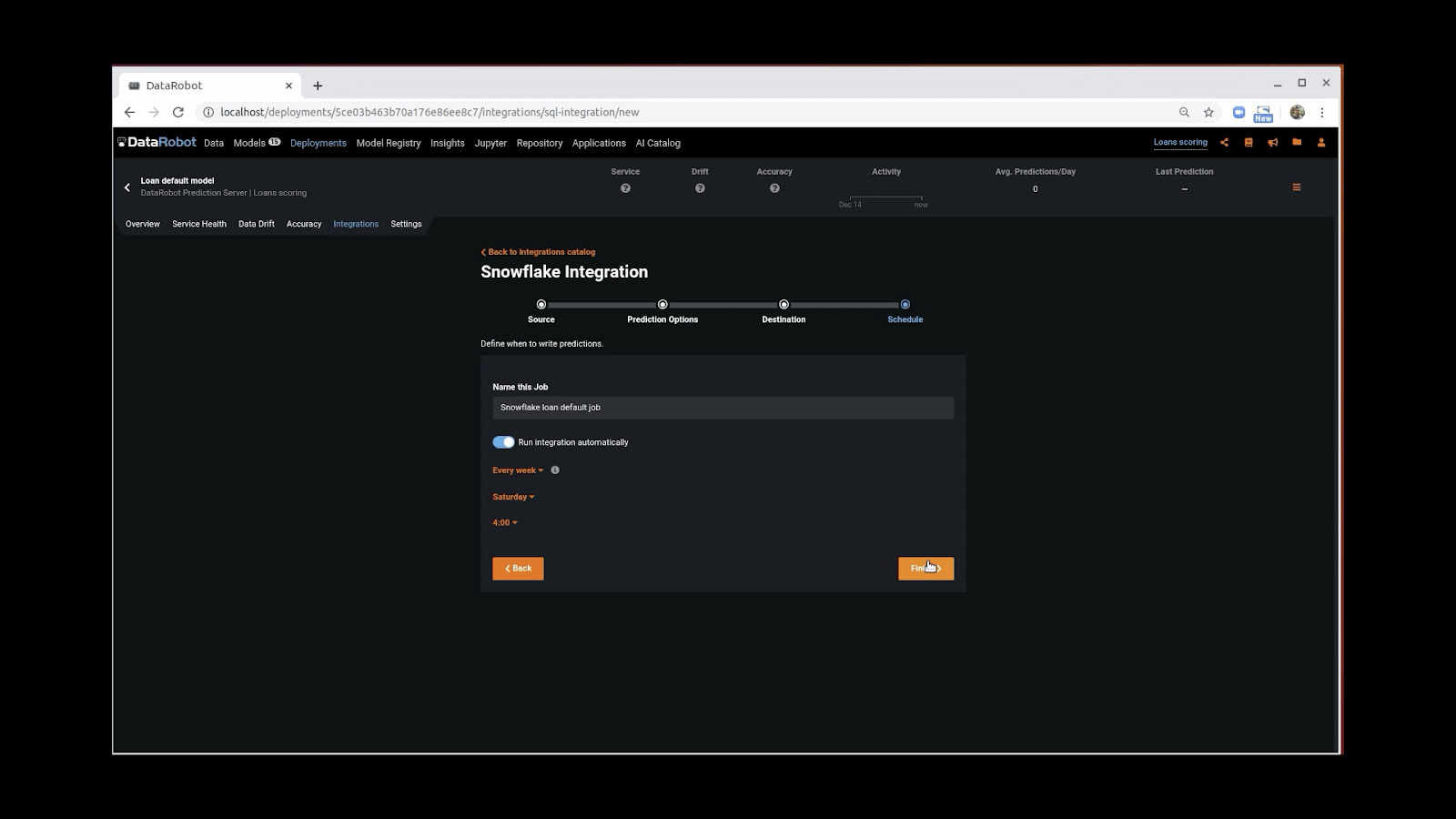

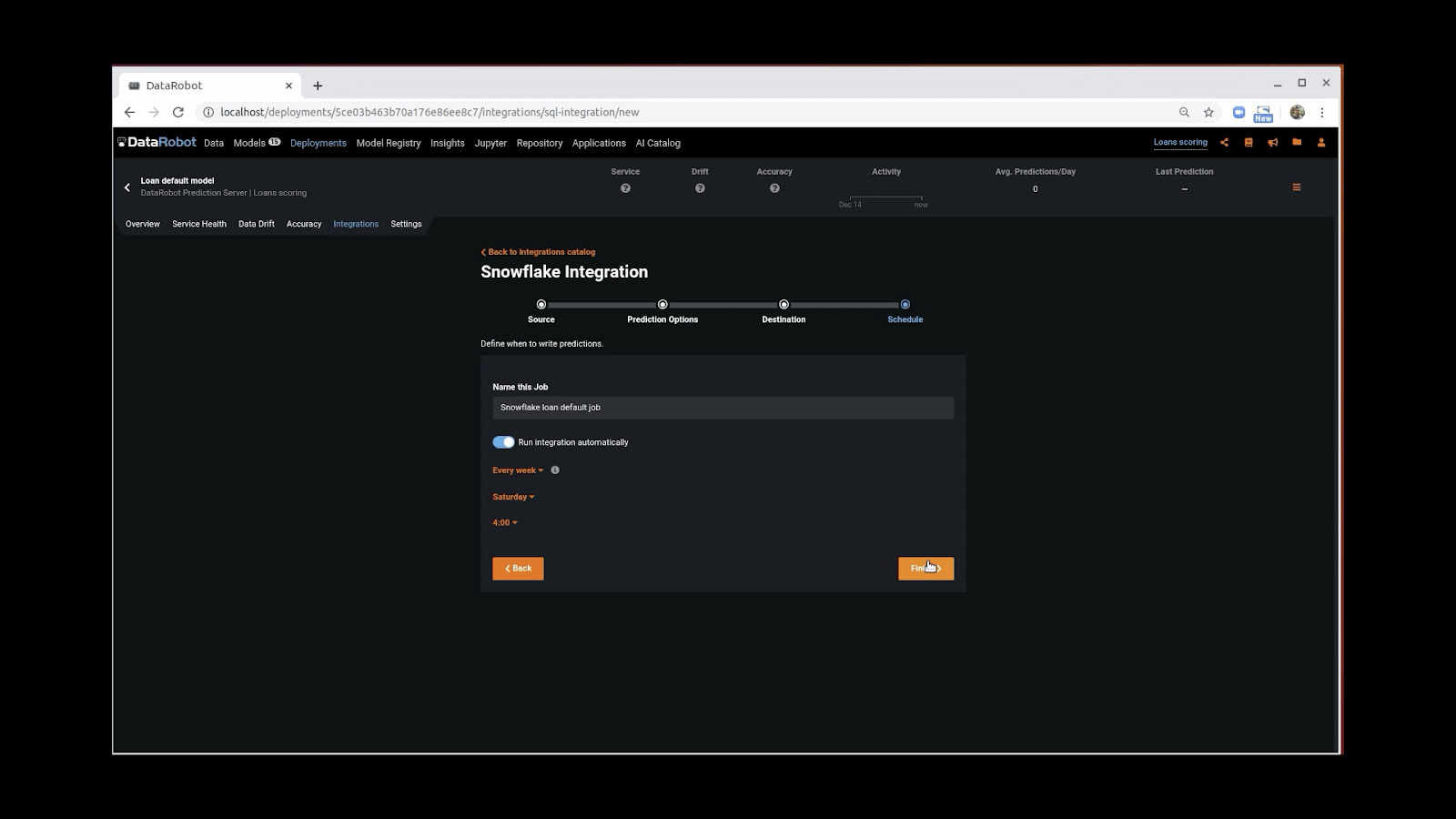

The last step is to name the Snowflake job and, if desired, set up the scheduler:

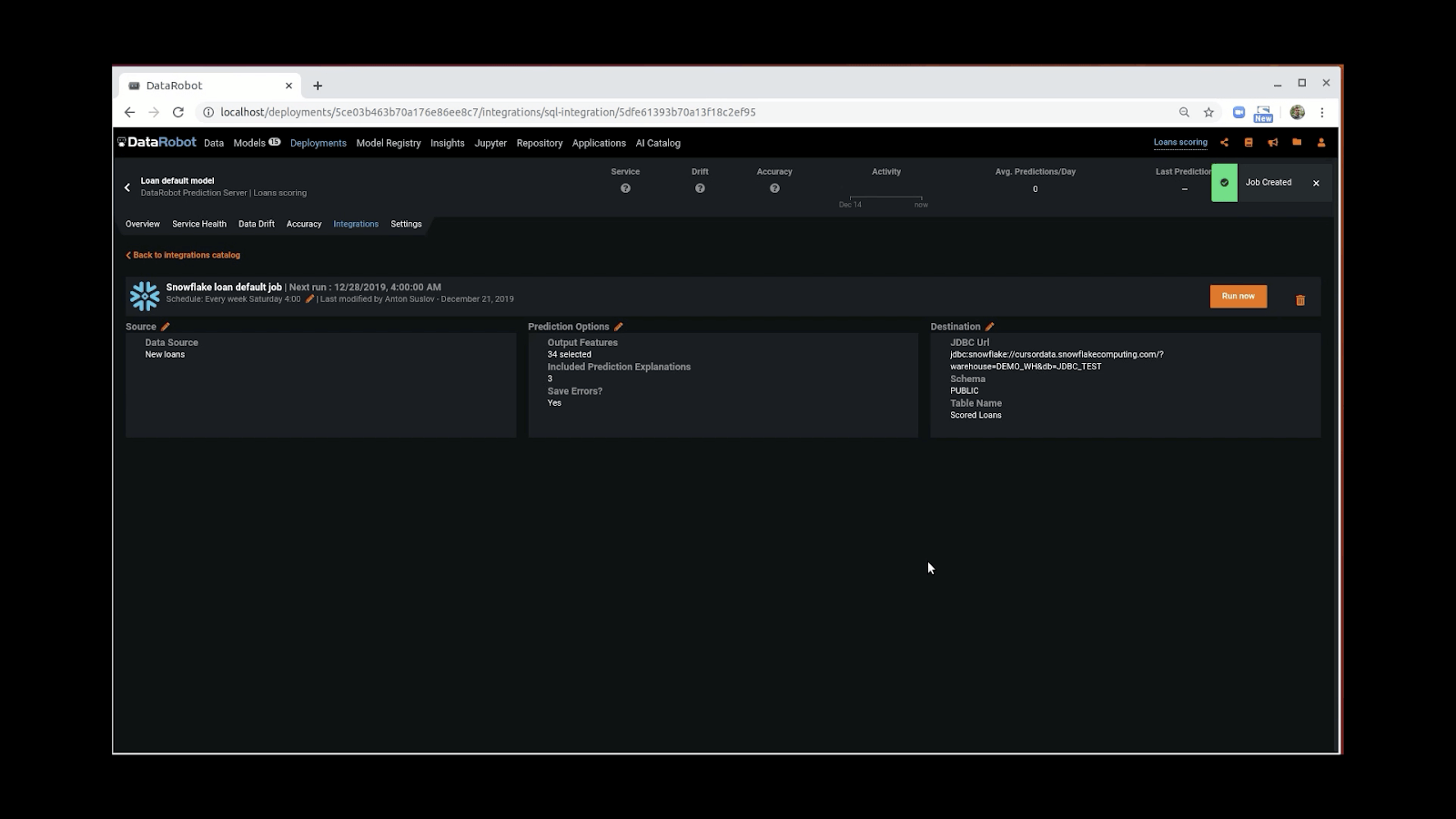

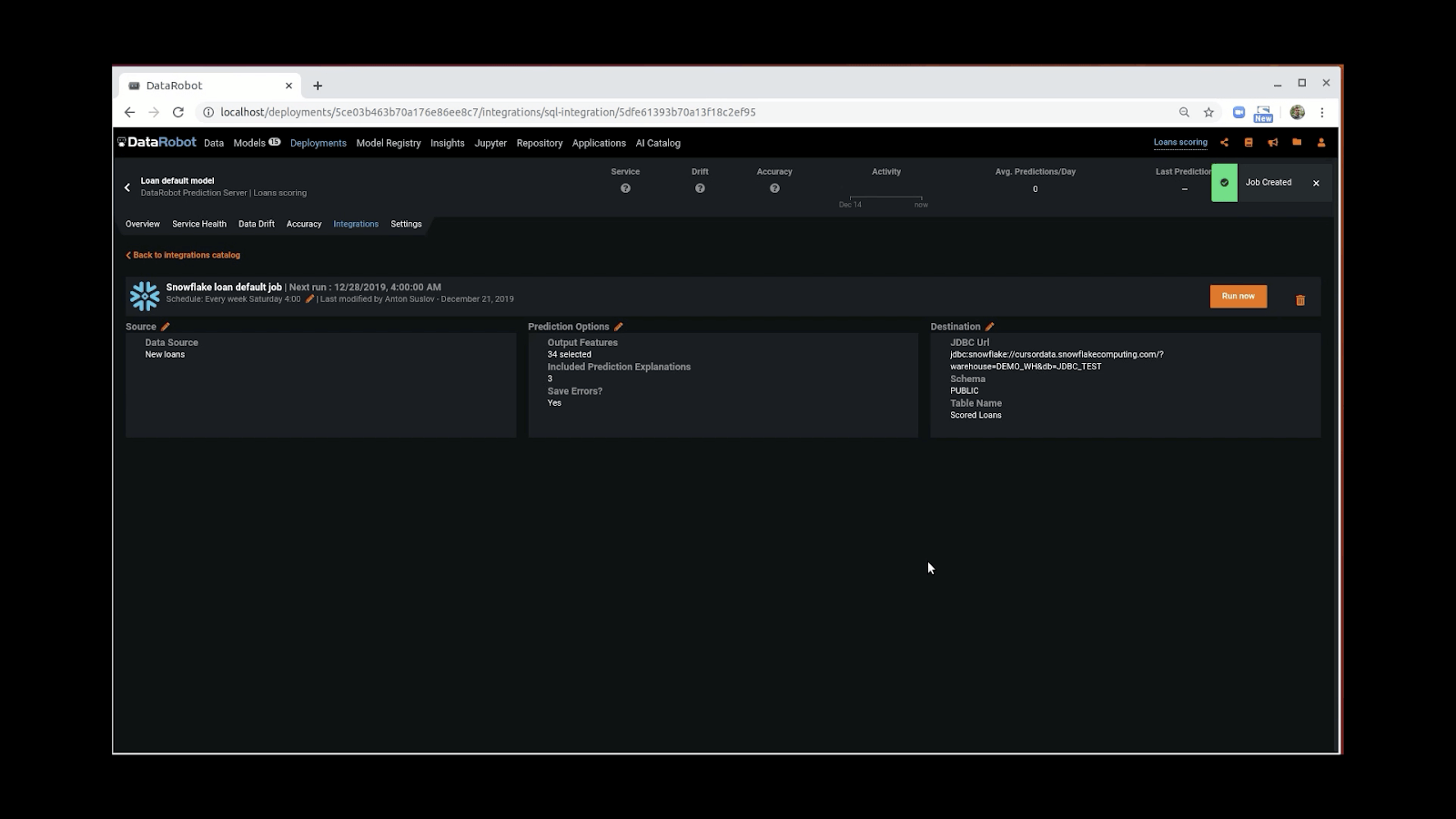
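
For readers who think in code, the settings collected across these steps can be pictured as a single job description. The sketch below is only an illustration of that idea: `WriteBackJob`, its field names, and the sample values are hypothetical placeholders and are not part of any DataRobot or Snowflake API.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class WriteBackJob:
    """Hypothetical container for the choices made in the wizard (not a DataRobot API)."""

    name: str                          # job name given in the last step
    source_data_source: str            # Snowflake Data Source picked from the Catalog
    destination_connection: str        # Snowflake JDBC connection used for the output
    destination_table: str             # table that receives the predictions
    include_explanations: bool = False
    save_error_messages: bool = False
    schedule: Optional[str] = None     # placeholder schedule text; None means no schedule

    def validate(self) -> None:
        # Every required text field must contain something other than whitespace.
        required = (
            "name",
            "source_data_source",
            "destination_connection",
            "destination_table",
        )
        for field_name in required:
            if not getattr(self, field_name).strip():
                raise ValueError(f"{field_name} must not be empty")

    def summary(self) -> dict:
        # Return the settings plus a flag saying whether a schedule was set.
        self.validate()
        info = asdict(self)
        info["scheduled"] = self.schedule is not None
        return info


# Placeholder values chosen purely for illustration.
job = WriteBackJob(
    name="nightly_churn_scores",
    source_data_source="CUSTOMER_FEATURES",
    destination_connection="snowflake_jdbc_prod",
    destination_table="CHURN_PREDICTIONS",
    include_explanations=True,
    schedule="daily at 02:00",
)
print(job.summary())
```

Grouping the choices this way mirrors what the wizard asks for: a source, a set of output options, a destination, and an optional schedule.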

The summary screen provides information about your job, such as its status, next scheduled run time, and destination.

The integration helps keep data and analytics easily accessible in the Snowflake platform and available across an organization, improving the value of your data and enabling better-informed decision-making.

Availability

This feature is available now as a Beta release to mutual customers of DataRobot and Snowflake that use DataRobot’s Managed deployment.

We plan to gather feedback, continue to refine the user experience and then make the connection generally available in Q2 with release 6.1.

Both Snowflake and DataRobot are committed to making data and AI accessible and simple for the entire organization and to accelerating time-to-value. As a result, we want to hear from you! Please share feedback about your experience with the write-back integration. Your input is invaluable as we work to further refine the offering.

Contact your Customer Success team to gain access and put this feature to work.

About the author

Steve Eppich

Senior Director of Product Management at DataRobot

Steve is an analyst-turned-product manager with experience in building and commercializing platforms that deliver analytics and information products. Steve holds an MBA from Northeastern University.